An LLM (Large Language Model) is software that has been trained to understand and generate text in a way similar to how a human being would.

It is like a virtual assistant capable of understanding natural language and responding coherently. OpenAI’s now well-known ChatGPT and Google’s Bard are LLMs.

The magic of an LLM lies in the training: this process involves exposure to huge amounts of text from books, articles, websites and more. From this text the LLM learns the rules of language, the relationships between words, and even the nuances of meaning. A key aspect of an LLM is its ability to understand context. It does not just recognize individual words, but analyzes the entire sentence to produce a coherent answer. This skill is crucial to ensure relevant and comprehensible answers. An LLM can produce articles, answer questions or even write stories: using the information learned during training, the model creates sequences of words that make sense within the context.

To fully understand how an LLM recognizes words, it is essential to know the training process.

Training and tokenization

During this phase, the model is exposed to a vast amount of text from books, articles, websites and more. This training allows it to learn word relationships, sentence meanings, and grammatical structures. Once the text is loaded, it is broken down into “tokens.” A token can be a word, part of a word, a punctuation mark or even a single letter. This subdivision allows the model to analyze the text in small units, making information processing more efficient.
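The splitting step can be illustrated with a minimal sketch. It assumes a very simple rule (one token per word or punctuation mark) and a hypothetical vocabulary built on the fly; production LLMs typically use learned subword tokenizers, so the names `simple_tokenize` and `build_vocab` are illustrative only.

```python
import re


def simple_tokenize(text):
    """Split text into word tokens and single punctuation-mark tokens."""
    # \w+ matches a run of letters/digits; [^\w\s] matches one punctuation mark.
    return re.findall(r"\w+|[^\w\s]", text)


def build_vocab(tokens):
    """Assign each distinct token an integer ID in order of first appearance."""
    vocab = {}
    for token in tokens:
        if token not in vocab:
            vocab[token] = len(vocab)
    return vocab


tokens = simple_tokenize("The cat sat. The cat slept!")
vocab = build_vocab(tokens)
token_ids = [vocab[token] for token in tokens]

print(tokens)     # ['The', 'cat', 'sat', '.', 'The', 'cat', 'slept', '!']
print(token_ids)  # [0, 1, 2, 3, 0, 1, 4, 5]
```

The model never sees the letters themselves, only the integer IDs, which is why repeated words such as “The” and “cat” map to the same number each time.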

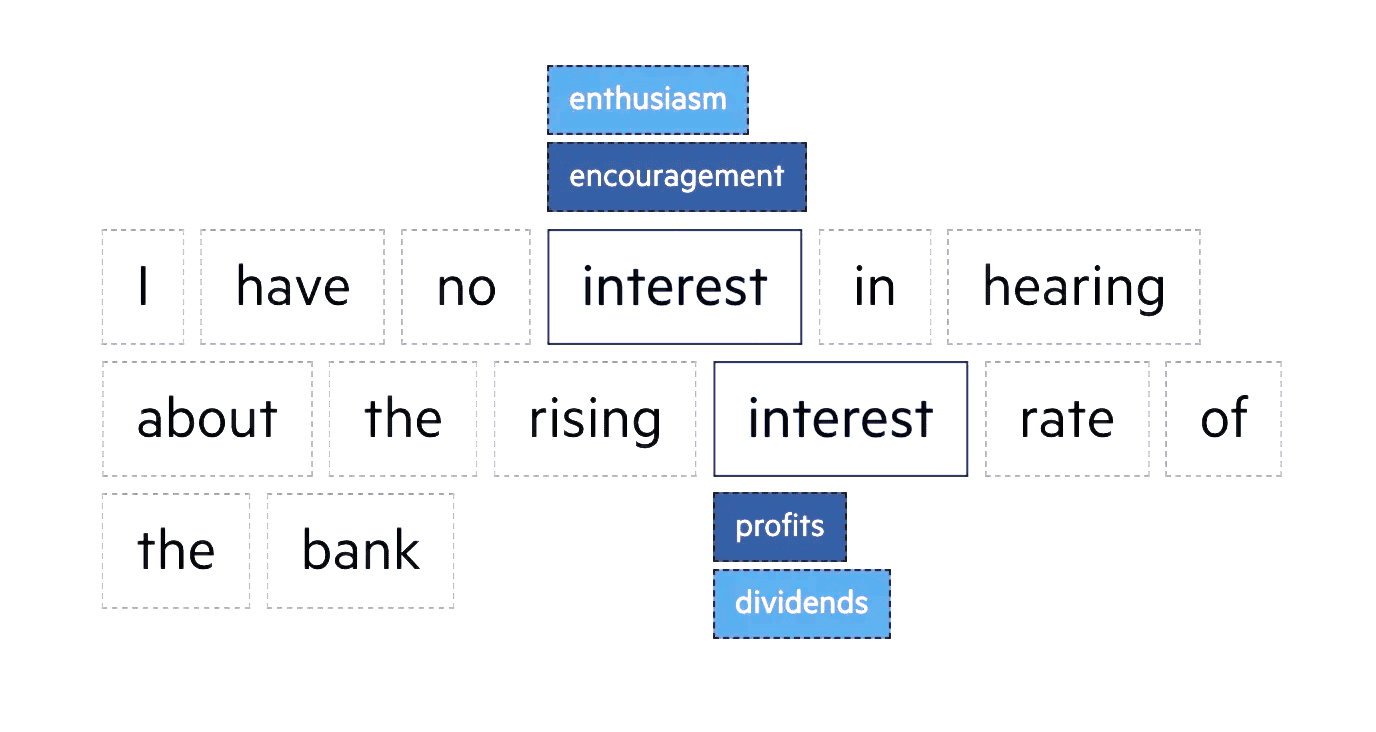

Context analysis

Understanding context is the key to an LLM. It does not just recognize individual words, but analyzes the entire context in which they are placed. To do this, it considers not only the word in question, but also the surrounding words. This allows it to make coherent sense of the text and generate relevant responses. For example, if a sentence contains the word “pressure,” the model will consider the context to determine whether it refers to physical, atmospheric, or psychological pressure. This ability to interpret is critical to avoid ambiguity and ensure accurate responses.

Vector representation

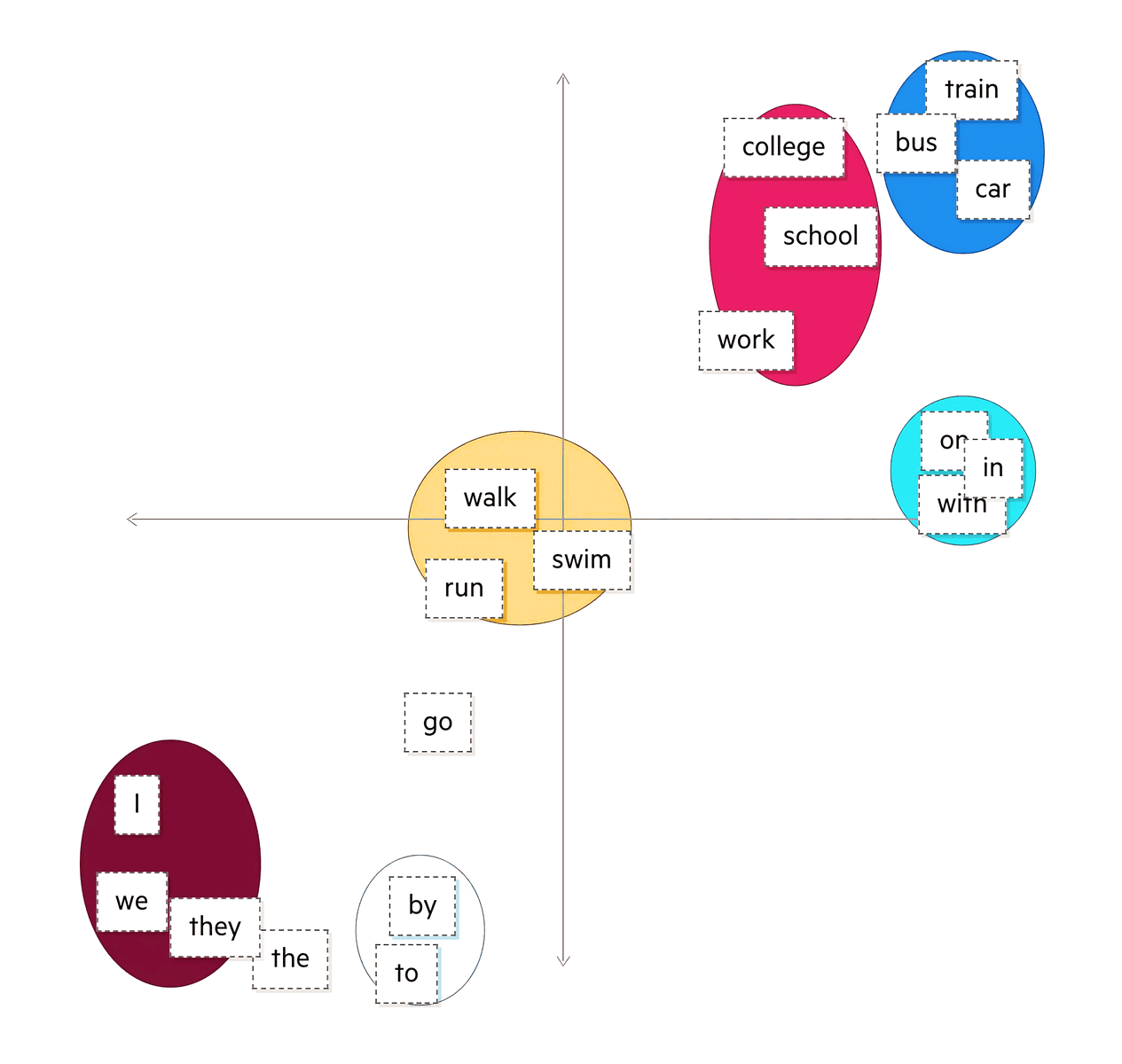

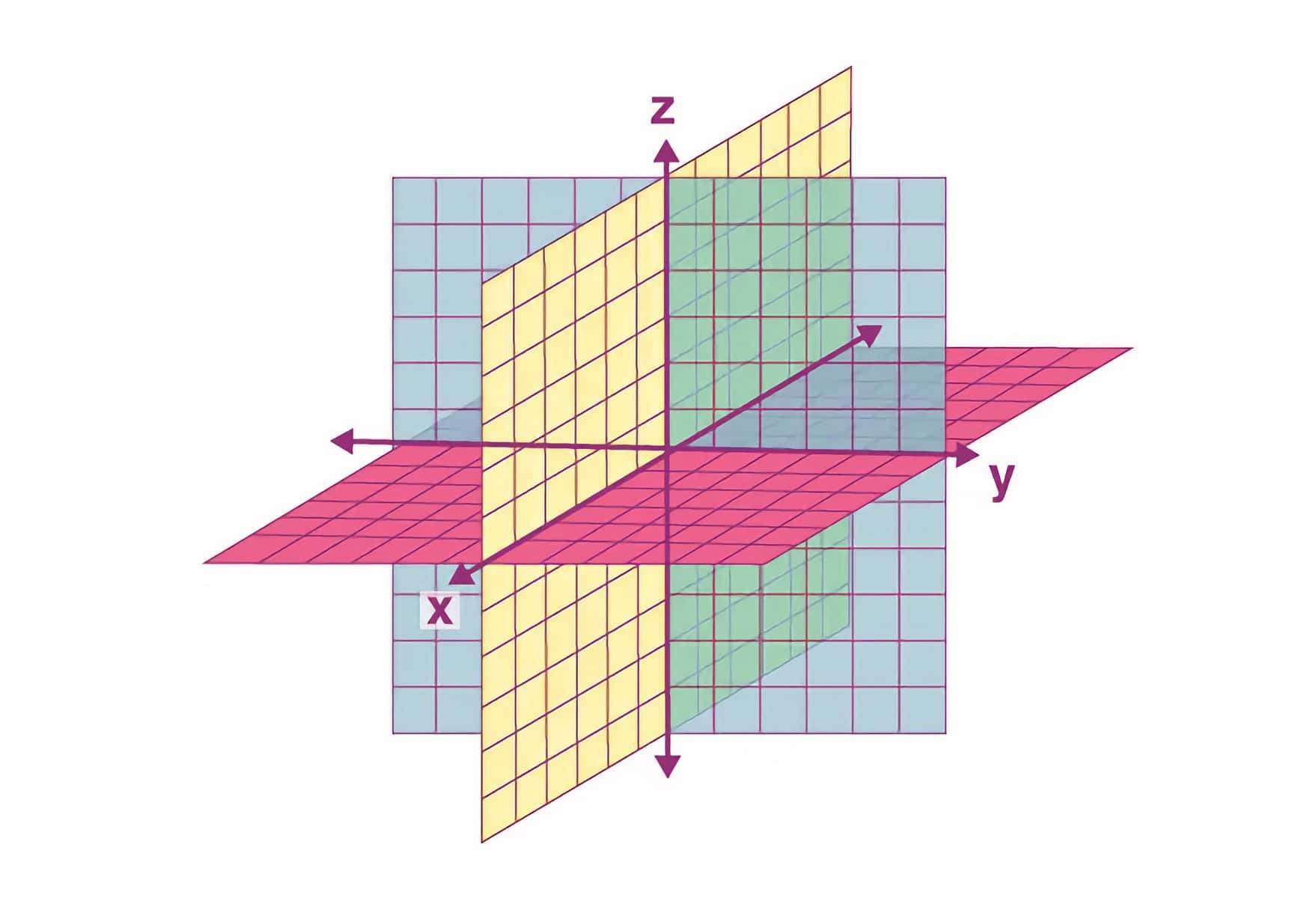

To perform this context analysis, an LLM uses a technique called “vector representation.” This means that each word is represented as a numerical vector in a multidimensional space. These vectors capture the semantic relationships between words.

For example, related words such as “sky” and “earth” will have vector representations that lie close together in this space. This numerical representation of words allows the model to handle meaning mathematically.
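One common way to measure how “close” two word vectors are is cosine similarity. The sketch below uses made-up four-dimensional vectors chosen purely for illustration (real models learn vectors with hundreds or thousands of dimensions), and the word “invoice” is a hypothetical unrelated term added for contrast.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy vectors: invented numbers, not taken from any trained model.
toy_vectors = {
    "sky": [0.9, 0.8, 0.1, 0.0],
    "earth": [0.8, 0.9, 0.2, 0.1],
    "invoice": [0.0, 0.1, 0.9, 0.8],
}

sim_related = cosine_similarity(toy_vectors["sky"], toy_vectors["earth"])
sim_unrelated = cosine_similarity(toy_vectors["sky"], toy_vectors["invoice"])

print(f"sky vs earth:   {sim_related:.2f}")
print(f"sky vs invoice: {sim_unrelated:.2f}")
```

A value near 1 means the vectors point in almost the same direction; with these toy numbers the related pair scores much higher than the unrelated one.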

Humans represent words with letters: C-A-T makes up the word “cat” in English. Language models represent words with numbers. These are called word vectors, and here is one way to represent “cat” as a vector:

[0.0074, 0.0030, -0.0105, 0.0742, 0.0765, -0.0011, 0.0265, 0.0106, 0.0191, 0.0038, -0.0468, -0.0212, 0.0091, 0.0030, -0.0563, -0.0396, -0.0998, -0.0796, …, 0.0002]

Words are complex, and language models store each word in a “word space”, a space with more dimensions than the human brain can picture. Imagine a three-dimensional space to get a sense of how many different points exist within it. Now add a fourth, fifth and sixth dimension.

Semantic-syntactic considerations

In addition to semantics, an LLM takes syntax into account. This means that it evaluates not only the meaning of words, but also how they are structured within the sentence. This enables it to generate grammatically correct and coherent texts.

The importance of attention

Another crucial element is the use of “attention” mechanisms. This allows the model to give more weight to some parts of the text than others, depending on the context. For example, in a complex question, an LLM will pay more attention to the most relevant parts to generate an accurate answer.
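The idea of giving more weight to some parts of the text can be sketched as follows. The article does not say which attention variant is meant, so this assumes the scaled dot-product scoring followed by a softmax that transformer models use; the two-dimensional query and key vectors are invented for illustration.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(query, keys):
    """Score each key against the query (scaled dot product), then normalize."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    return softmax(scores)


# Hypothetical vectors: one key per word, plus the query for the current word.
words = ["the", "blood", "pressure"]
keys = [[0.1, 0.0], [0.9, 0.7], [1.0, 0.8]]
query = [1.0, 1.0]

weights = attention_weights(query, keys)
for word, weight in zip(words, weights):
    print(f"{word:>8}: {weight:.2f}")
```

With these toy numbers, the filler word “the” receives the smallest weight, while “blood” and “pressure” receive most of the attention.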

Limitations and challenges

LLMs can sometimes generate unexpected or not entirely accurate answers. This can result from a superficial understanding of the text or from ambiguous contexts. It is important to remember that, despite their abilities, LLMs do not have human intuition, at least for now.

LLMs are a new technology and we are still in the early days of their development. At the beginning of any new technology, there is a tendency to apply it to existing contexts, but with time totally new uses tend to emerge that have little to do with the past. Let us now look at four of the countless ways of using LLMs.

Making impossible problems possible

LLMs can take something that humans simply cannot do and make it feasible, for example sequencing DNA. An LLM can translate these sequences into a language problem, which makes them solvable. The same applies to many other things where we simply do not “get it,” in part because LLMs can draw on millions of data points to come up with an ad hoc, non-prepackaged answer, something that even the most intelligent human being cannot do.

Making easy problems less frustrating

Language models can eliminate friction in matching supply and demand, as in the used car market, and in other categories that require significant human input and information exchange, such as service marketplaces for finding an electrician.

Vertical AI

This follows the SaaS playbook: start by identifying a pain point that needs to be solved for a specific user group in a specific market and solve it; then expand the product range to cover other problems; finally become the main reference and one-stop shop through which that person manages his or her daily life, and add payments to control the flow of money and take a commission. Vertical AI is similar: become incredibly focused on serving a specific customer in a specific industry and use that specialization to deliver a product 10 times better. In AI, this means training a model on industry-specific data, which creates a feedback loop that leads to product improvement. Recently there have been AI applications for psychological help, and others that take a photo and a description and give a diagnosis of a medical problem, reportedly with a higher degree of accuracy than doctors (again because AI taps into millions of data points that even the best doctor does not have access to).

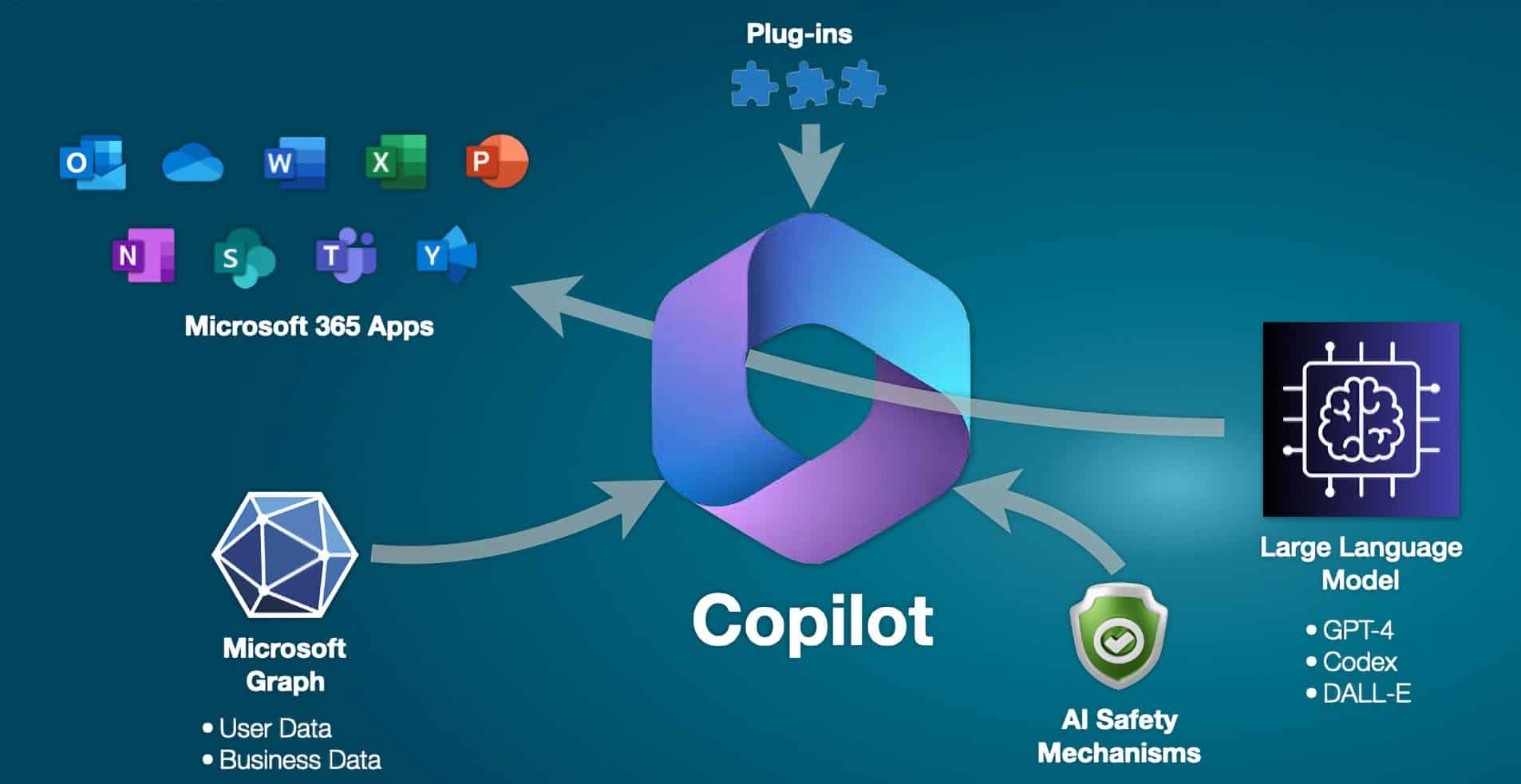

AI copilot

Everyone will have an AI copilot that will enhance their knowledge and creativity. Before AI directly replaces humans, it will empower them. GitHub Copilot, which helps software developers write code, is the first AI “copilot” product to publicly announce that it has surpassed $100 million in annual sales.

A recent Harvard Business School study measured how LLMs amplify consultants’ performance. In the HBS experiment, consultants randomly assigned to have access to GPT-4 completed 12.2 percent more tasks on average and worked 25.1 percent faster. The quality of their work improved by 40 percent.

Microsoft announced that Windows will from now on be built around a copilot that will help us with any task, from writing or replying to an email to creating an image for a presentation that is set up automatically from a written description. Google also announced that Bard will soon arrive on Android phones to assist with many functions.

LLMs will soon be so present in our daily lives that they will become more and more invisible. We will always have a “guardian angel” with us that will talk to us (the next big step is that these LLMs will talk like real people) and advise and help us on virtually anything. This raises another topic: we may become lazier and more dependent on technology. That issue is important, but this is not the place to delve into it.

At Ex Machina, we are always looking for new technologies to use in our projects to build personalized solutions for companies and public bodies. If you want to learn more about our AI solutions, visit our website: https://exmachina.ch